Something broke in how I used AI this year.

I stopped writing prompts and started building workspaces.

Memory that survived sessions. Knowledge bases that got smarter on their own. By month three I could not remember the last time I typed a question into a chat box and waited for an answer.

That is the shift nobody is summarizing properly, and it is the only thing about 2026 you need to understand.

Every trend you are about to read is part of the same thing. Agentic coding. Open-source agent frameworks. Karpathy's LLM wiki. GraphRAG and "ragless" systems. Grok sitting inside every X thread you scroll past. They sound like five different stories because they shipped in five different weeks. They are one story.

AI stopped being a thing you talk to. It became a thing you build around.

Once you see it that way, the rest of 2026 makes sense in a way it did not a year ago.

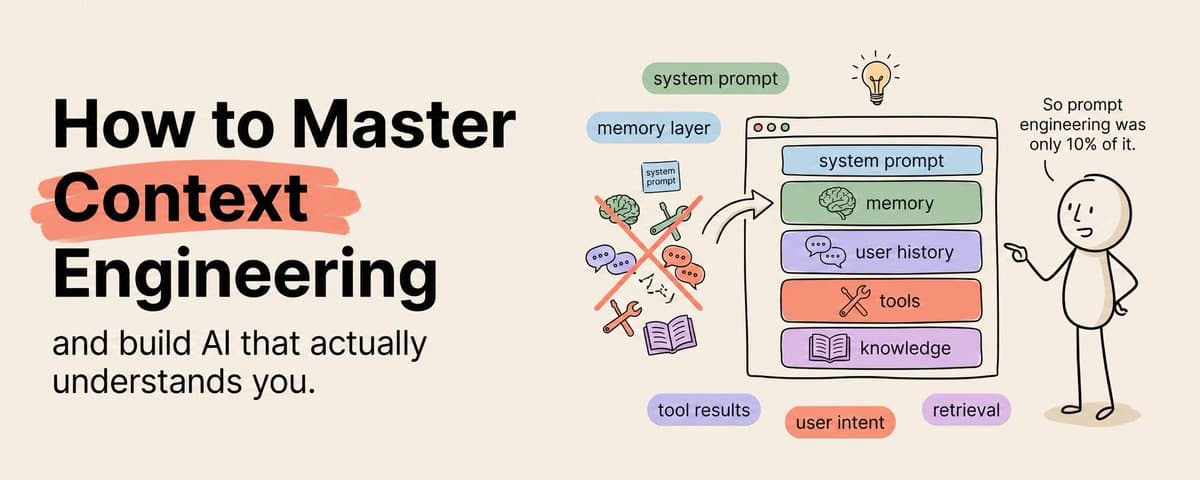

The year prompts stopped mattering

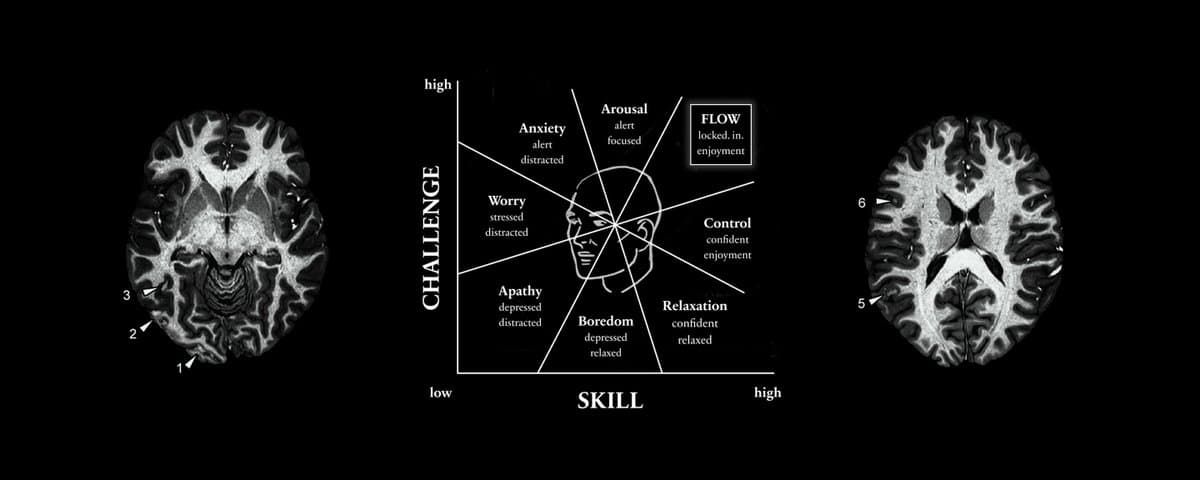

Two years ago the winning skill in AI was prompt engineering. You learned the right phrasing, the right persona, the right chain of thought, and you got better outputs than the person next to you. That skill had a half-life of maybe eighteen months.

What killed it was not a better model. It was the realization that prompts are the thinnest, most volatile layer of the stack.

Context is the thickest. Context is what the model sees before it starts generating. Your project files. Your past conversations. Your tools. Your style guide. Your codebase. Your domain knowledge. Everything you would whisper in a new hire's ear on day one.
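
To make "what the model sees before it starts generating" concrete, here is a minimal sketch of a context assembly step. The source names, the priority numbers, and the character budget (a crude stand-in for a real token count) are all assumptions for illustration, not any particular tool's behavior.

```python
from dataclasses import dataclass


@dataclass
class ContextSource:
    name: str      # hypothetical label, e.g. "style_guide"
    text: str
    priority: int  # lower number = more important


def build_context(sources: list[ContextSource], budget_chars: int) -> str:
    """Concatenate sources in priority order, skipping any that do not fit."""
    parts: list[str] = []
    used = 0
    for src in sorted(sources, key=lambda s: s.priority):
        block = f"## {src.name}\n{src.text}\n"
        if used + len(block) > budget_chars:
            continue  # too big for what is left; a smaller source may still fit
        parts.append(block)
        used += len(block)
    return "".join(parts)
```

The point of the sketch is the ordering: the prompt is whatever gets appended last, and everything above it is an asset you maintain.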

The teams winning in 2026 figured out that whoever owns the context layer owns the output quality. Prompt engineers became context engineers. And context, unlike a clever prompt, is an asset that compounds.

Everything else in this article is what "owning the context layer" actually looks like once you start building it.

The shift to agentic coding

Claude Code was the canary.

When it first landed as a CLI tool, most people treated it like a fancy autocomplete. Paste some code, ask for a fix, move on. That framing missed the point by two orders of magnitude.

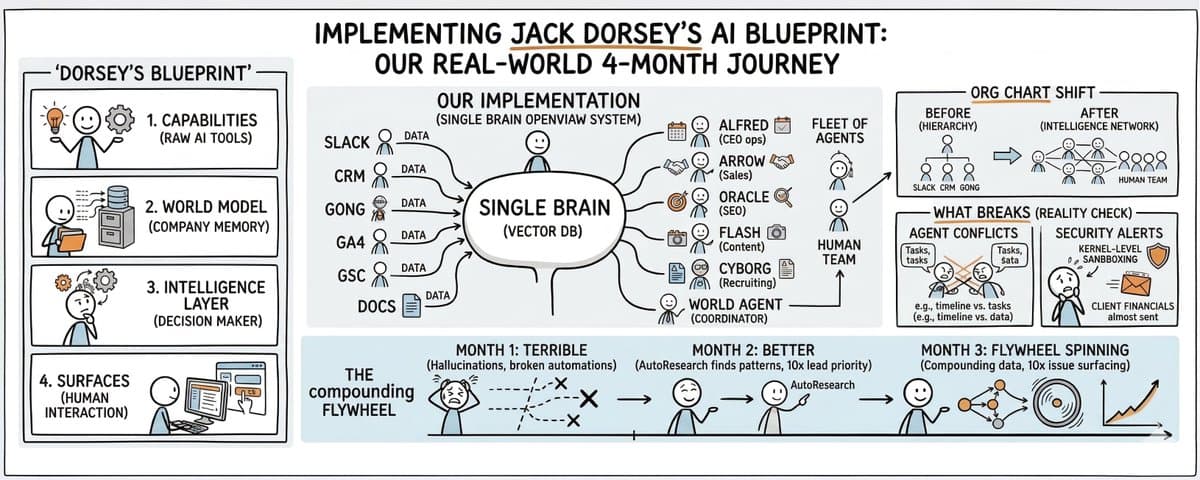

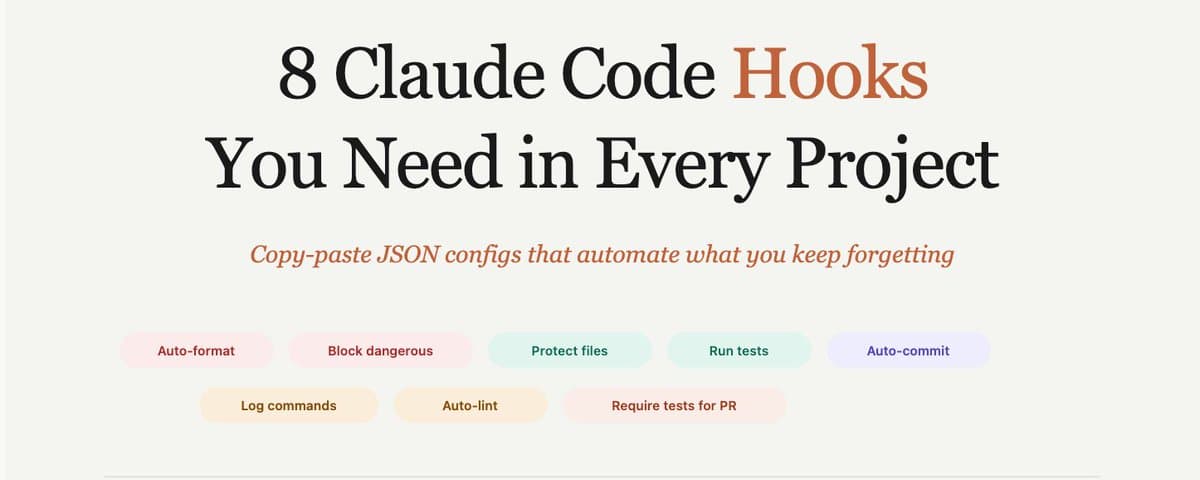

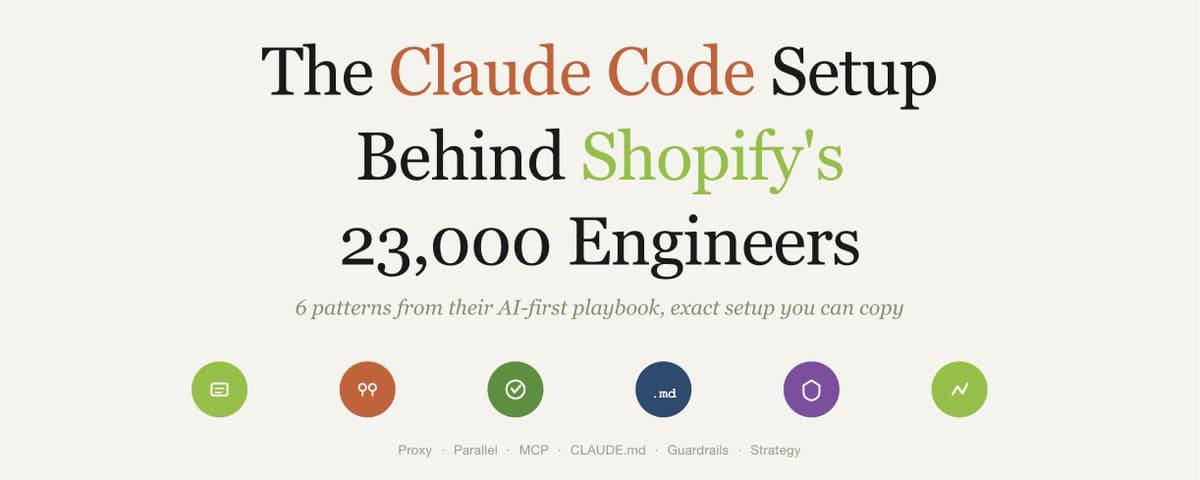

The real shift was that Claude Code did not try to be a typing assistant. It tried to be a coworker. A project file that remembered the codebase. A permission system that asked before touching production. MCP integrations that let it read from databases, run tests, spin up parallel instances against the same branch. The pattern reads less like GitHub Copilot and more like hiring a junior engineer who already knows your stack.
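
A permission system that asks before touching production can be pictured as a gate in front of every tool call. This is a rough sketch under my own assumptions, not how Claude Code implements it: the tool names and the `approve` callback are hypothetical.

```python
from typing import Callable

# Hypothetical tool names; a real agent would register its own.
SAFE_TOOLS = {"read_file", "run_tests"}
GATED_TOOLS = {"write_file", "deploy"}


def run_tool(name: str, action: Callable[[], str], approve: Callable[[str], bool]) -> str:
    """Run safe tools directly, ask before gated ones, refuse unknown ones."""
    if name in SAFE_TOOLS:
        return action()
    if name in GATED_TOOLS and approve(name):
        return action()
    return f"blocked: {name}"
```

Unknown tools are refused by default, which is the choice that matters once an agent is pointed at real systems.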

I ran parallel Claude Code sessions on my own backend for the first time in January. One session fixed a bug in the retrieval layer. Another worked on a new pattern detection pipeline. A third wrote the integration tests for both. I reviewed diffs, not keystrokes.

That day ended with three merged branches and a realization. My job stopped being "write the code." It became "describe the goal, set the guardrails, review the work."

That is the delegation layer the industry spent two years pretending did not exist. In 2026 it stopped being a demo and started being a workflow.

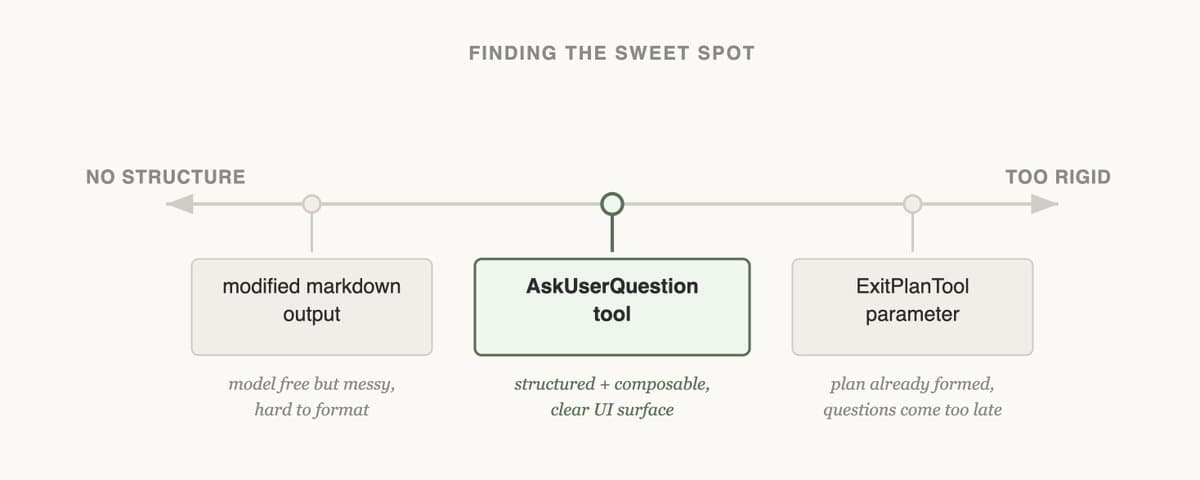

The reason Claude Code became the reference point is that it surfaced every hard problem nobody wanted to solve. How do you manage context over a four-hour session without the agent forgetting what it was doing? How do you let it touch real systems without nuking production? How do you compact history without losing the thread of a long refactor? Every serious agentic coding tool that shipped after it is a different answer to the same three questions.
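
History compaction, in its simplest form, keeps the most recent turns verbatim and folds everything older into a summary. The sketch below assumes a `summarize` callable that stands in for an LLM call; real tools make their own choices about what to keep.

```python
from typing import Callable


def compact_history(
    turns: list[str],
    keep_recent: int,
    summarize: Callable[[list[str]], str],
) -> list[str]:
    """Replace all but the last `keep_recent` turns with one summary entry."""
    if len(turns) <= keep_recent:
        return list(turns)  # nothing to compact yet
    cut = len(turns) - keep_recent
    older, recent = turns[:cut], turns[cut:]
    return [f"[summary] {summarize(older)}"] + recent
```

Everything hard about compaction lives inside `summarize`: if it drops the goal of the refactor, the agent loses the thread no matter how many recent turns survive.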

Cursor, Copilot Workspace, the newer Devin releases, the SWE-agent academic line. Different answers, same questions. That is how you know a category has matured.

Open-source agents grew up

The open-source side of this story is the part most people undersell.

OpenClaw is the cleanest example. When it first launched, the framing was "an agent that plugs into your chat apps." Cute demo, nothing more. By mid-2026 the release notes read like infrastructure engineering. HSTS headers. SSRF policy changes. External secrets management. Cron reliability patches. Multilingual memory embeddings. Multi-model routing. Thread-bound agents so conversations in Signal do not cross-contaminate with conversations in Telegram.
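
Thread-bound memory is easy to picture as a store keyed by channel and thread, so nothing leaks between conversations. This is a sketch of the idea only; the class and method names are hypothetical and say nothing about how OpenClaw implements it.

```python
from collections import defaultdict


class ThreadBoundMemory:
    """Keep notes per (channel, thread) so conversations never mix."""

    def __init__(self) -> None:
        self._store: dict[tuple[str, str], list[str]] = defaultdict(list)

    def remember(self, channel: str, thread_id: str, note: str) -> None:
        self._store[(channel, thread_id)].append(note)

    def recall(self, channel: str, thread_id: str) -> list[str]:
        # .get avoids creating empty entries for threads never written to
        return list(self._store.get((channel, thread_id), []))
```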

That list is not exciting. That list is exactly what you need to run an agent in production without it becoming a security incident.

The maturation pattern here matters more than the specific features. Open-source agent projects stopped chasing the next benchmark and started shipping the boring infrastructure that makes an agent survive a real user base. The demo era was 2024. The demo-to-prod era was 2025. 2026 was the year open-source agents had to answer the same compliance questions a B2B SaaS product answers in its second round of funding.

Hermes 3 sits at the model end of the same trend. Nous Research positioned it as a frontier open model with long-term context retention, multi-turn conversation, internal-monologue capabilities, and agentic function calling. It is a fine-tune of the Llama 3.1 models across their sizes, trained primarily on synthetic data. You do not train a model that way unless you assume it is going to get wrapped in an agent loop and left running.

Read the two together. OpenClaw is building the harness. Hermes is training the model that expects to live inside one. They are different parts of the same bet: that the next wave of open-source AI will be agents, not chatbots, and the teams winning will have invested in the plumbing long before anyone else thought it mattered.

If you are an engineer choosing what to learn next, this is the unglamorous answer. Spend a weekend reading the OpenClaw release notes end to end. You will learn more about what production AI actually looks like than from any paper you read this year.

Knowledge bases that compound

Andrej Karpathy did something quietly radical in March.

He posted a thread describing his personal knowledge system. Raw source material goes in. An LLM compiles it into an interlinked markdown wiki. Every time something new comes in, the wiki gets updated, cross-referenced, compressed, pruned. Secondary writeups say one of his research wikis hit around 100 articles and roughly 400,000 words. A book's worth of knowledge, maintained by the AI itself, queryable at the speed of thought.

That post broke the brain of every prompt engineer on X for a week.

The reason it mattered is not the specific setup. It is the mental model. Karpathy reframed AI from a transient assistant into a compounding research partner. The reusable asset stopped being the prompt and started being the maintained knowledge layer around the model.

This matches what I have been building for my own work. A memory layer with decay, deduplication, contradiction handling, pattern detection. A content corpus that gets richer every time I add a new source. A style guide the model learns from by reading my past outputs. None of that is prompt engineering. All of it is context engineering. And all of it compounds, which means every week I put into it makes every future session better without any additional work from me.
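
Two of those pieces, decay and deduplication, fit in a few lines. This is a simplified sketch, not my production code: the 30-day half-life and the whitespace-and-case normalization are assumptions picked for illustration.

```python
def decayed_score(importance: float, age_days: float, half_life_days: float = 30.0) -> float:
    """Exponential decay: a memory loses half its weight every half-life."""
    return importance * 0.5 ** (age_days / half_life_days)


def dedupe(notes: list[str]) -> list[str]:
    """Drop notes that match an earlier one after lowercasing and whitespace cleanup."""
    seen: set[str] = set()
    kept: list[str] = []
    for note in notes:
        key = " ".join(note.lower().split())
        if key not in seen:
            seen.add(key)
            kept.append(note)
    return kept
```

Contradiction handling and pattern detection need a model in the loop, so they are left out here.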

The deepest implication of the Karpathy thread is that durable context is starting to look more valuable than clever prompting. A prompt is good for one response. A well-maintained knowledge base is good for every response you will ever generate from that point forward. That is not a small difference. That is the difference between writing a letter and building a library.

If you have not started your own version of this, start now. A shared folder. A note-taking app with good tagging. A weekly ritual where you drop everything interesting you read into one place and let an AI sort it. Six months from now you will be the person your team quotes.

Beyond naive RAG

The retrieval trend is the one most commonly miscommunicated on X.

"RAG is dead" is a bad read. Naive RAG lost credibility. There is a difference.

Naive RAG is the pipeline you built in 2023. Chunk your documents, embed them, drop them in a vector database, retrieve the top-k matches, stuff them into the prompt. It worked well enough for demos. It fell apart the moment anyone tried to use it on a serious knowledge base with contradictory sources, temporal changes, or queries that required reasoning across multiple documents.
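
For reference, the whole naive pipeline fits on one screen. In this sketch a bag-of-words counter stands in for a real embedding model and a plain list stands in for the vector database. Both are assumptions made to keep the example self-contained.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int) -> list[str]:
    """Split text into fixed-size word windows, ignoring semantic boundaries."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]
```

Nothing in this pipeline knows which chunk is newer, which source contradicts which, or that an answer might need two chunks read together. That is the gap the three camps are reacting to.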

In 2026 the conversation split into three camps.

The first camp kept RAG and got serious about it. Better chunking strategies that preserve semantic boundaries. Rerankers that understand query intent instead of keyword overlap. Hybrid search that combines BM25 with vector similarity because neither is enough on its own. This is the camp doing the unglamorous engineering work, and it is where most production teams ended up.
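
One common way to combine a keyword ranking with a vector ranking is reciprocal rank fusion. That is my choice for this sketch, not something every team in this camp uses. It assumes you already have two ranked lists of document IDs, and `k = 60` is the conventional default constant.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each document scores the sum of 1 / (k + rank)."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for determinism
    return sorted(scores, key=lambda d: (-scores[d], d))
```

A document that ranks reasonably well in both lists beats one that tops a single list and sinks in the other, which is the behavior you want from hybrid search.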

The second camp went structural. GraphRAG was the flagship idea. Instead of treating your knowledge as an unordered bag of chunks, you extract entities and relationships, build a graph, and let the model reason over the structure. It solves problems naive RAG cannot touch, like "what connects these two papers that never cite each other?" The tradeoff is complexity. Graphs are expensive to build and maintain, and most teams discover halfway through that their knowledge does not need graph reasoning in the first place.
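
The "what connects these two papers" question becomes a path search once the graph exists. In this sketch the triples are written by hand. In a real GraphRAG pipeline an LLM would extract them, and that extraction is the expensive part. All entity names here are made up.

```python
from collections import defaultdict, deque
from typing import Optional


def build_graph(triples: list[tuple[str, str, str]]) -> dict[str, set[str]]:
    """Turn (head, relation, tail) triples into an undirected adjacency map."""
    graph: dict[str, set[str]] = defaultdict(set)
    for head, _relation, tail in triples:
        graph[head].add(tail)
        graph[tail].add(head)
    return graph


def find_connection(graph: dict[str, set[str]], start: str, goal: str) -> Optional[list[str]]:
    """Breadth-first search for the shortest chain of entities linking two nodes."""
    if start == goal:
        return [start]
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        for neighbor in sorted(graph.get(path[-1], ())):
            if neighbor in seen:
                continue
            if neighbor == goal:
                return path + [neighbor]
            seen.add(neighbor)
            queue.append(path + [neighbor])
    return None  # no chain of entities links the two
```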

The third camp went ragless. Not in the sense that retrieval disappeared. In the sense that you pre-compile and maintain a structured knowledge layer the model reasons over directly, instead of doing live retrieval every time. Karpathy's wiki is the canonical example. The model does not chunk-and-retrieve when you ask a question. It reads a maintained document that was designed to be read. The retrieval happened at write time, not at query time.
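
"Retrieval at write time" can be sketched as a store where ingesting does the work and reading is a plain lookup. The `compile_page` callable stands in for the LLM that rewrites a page, and the line-level merge below is a deliberately dumb placeholder for it. None of this is Karpathy's actual setup.

```python
from typing import Callable


def append_new_lines(existing: str, source_text: str) -> str:
    """Placeholder compiler: add only lines the page does not already contain."""
    lines = existing.splitlines()
    for line in source_text.splitlines():
        if line and line not in lines:
            lines.append(line)
    return "\n".join(lines)


class CompiledWiki:
    def __init__(self, compile_page: Callable[[str, str], str]) -> None:
        self.pages: dict[str, str] = {}
        self._compile = compile_page  # stand-in for an LLM call

    def ingest(self, topic: str, source_text: str) -> None:
        """Write time: fold the new source into the topic's page."""
        self.pages[topic] = self._compile(self.pages.get(topic, ""), source_text)

    def read(self, topic: str) -> str:
        """Query time: no search, just return the maintained page."""
        return self.pages.get(topic, "")
```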

All three camps are right about different things. Improved RAG is right for most production teams with structured corpora and clear query patterns. GraphRAG is right for research and analysis where relationships matter more than documents. Ragless is right for personal knowledge and high-leverage domains where you can afford to keep the corpus curated and reap compound returns.

The wrong answer is picking one and declaring the others dead. The builders who ship in 2026 are the ones who understand that retrieval architecture is a design choice, not a religion.

The convergence nobody is naming

Here is the thing most of the takes on X are missing.

Agentic coding. Open-source agent frameworks. Karpathy-style knowledge bases. Beyond-naive RAG. Grok sitting inside every X thread you scroll past. These all look like separate trends. They are the same trend from five different angles.

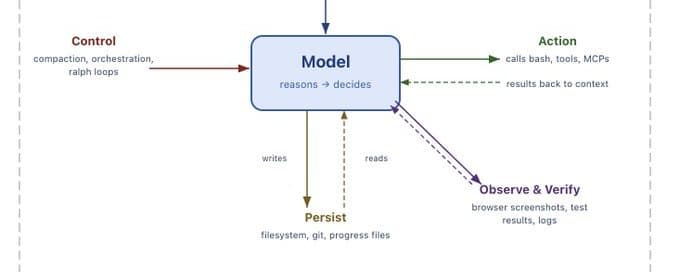

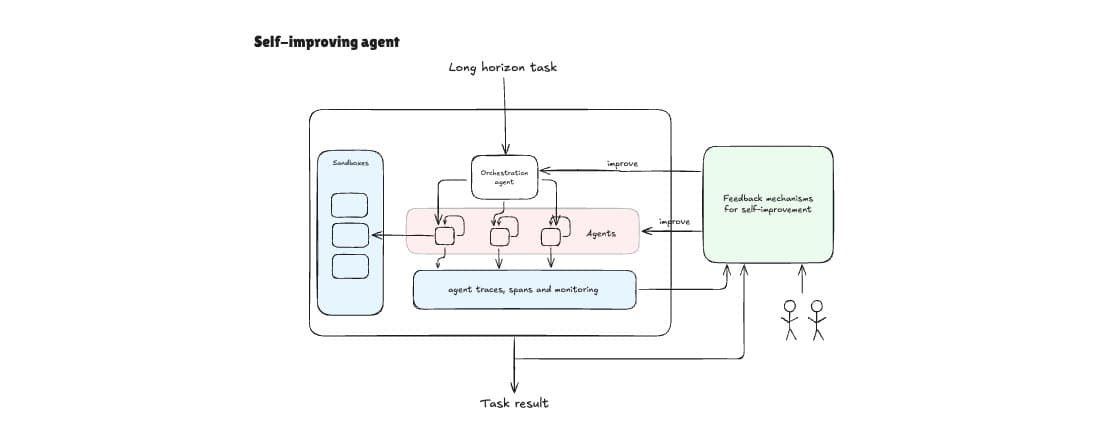

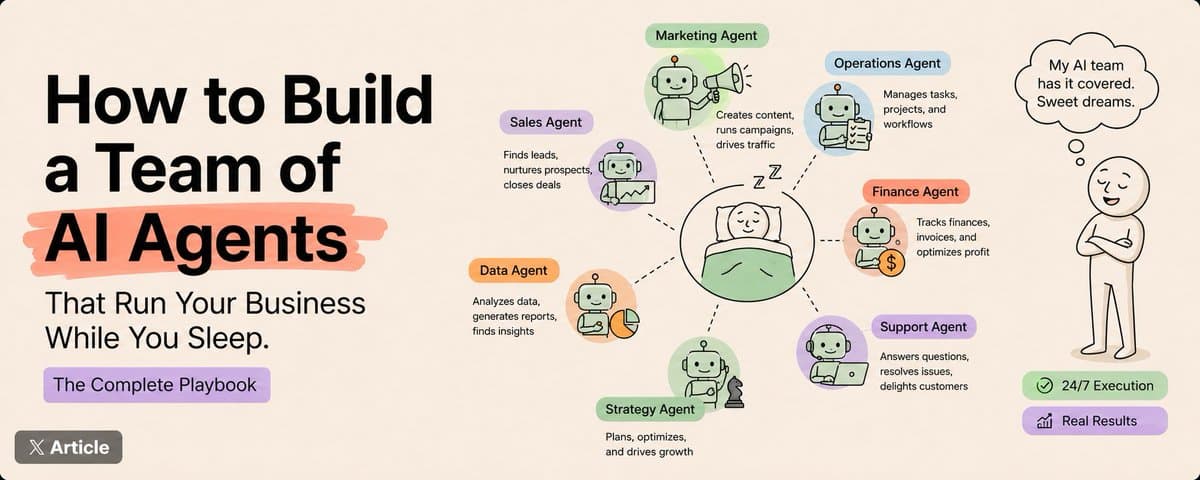

The unifying shape is this: AI became workflow-native, persistent, and tool-using.

Workflow-native means the AI lives inside the actual work, not in a separate chat window. Claude Code inside the terminal. OpenClaw inside Signal. Grok inside the X search bar and every reply you post. You stop context-switching to talk to the AI. The AI shows up where you already are.

The X product surface is underrated as a signal here. Grok did not win by being the smartest model. It won by being the model that was already in the app you were scrolling. Summaries appear next to trending topics. Replies get AI-assisted drafts. Search gained a reasoning layer. Platform behavior itself became the distribution channel for AI. That is a template every consumer product is now copying, and it is why the "where does the AI live" question is starting to matter more than the "which model is best" question.

Persistent means memory survives across sessions. Not in the shallow "ChatGPT remembers your name" sense. In the deeper sense where the model knows what you were working on last week and picks up where you left off. Hermes 3's long-term context retention. Claude Code's project files. Your knowledge wiki. The retrieval layer you maintain. All of these are different ways of answering the same question: how does the AI remember anything?

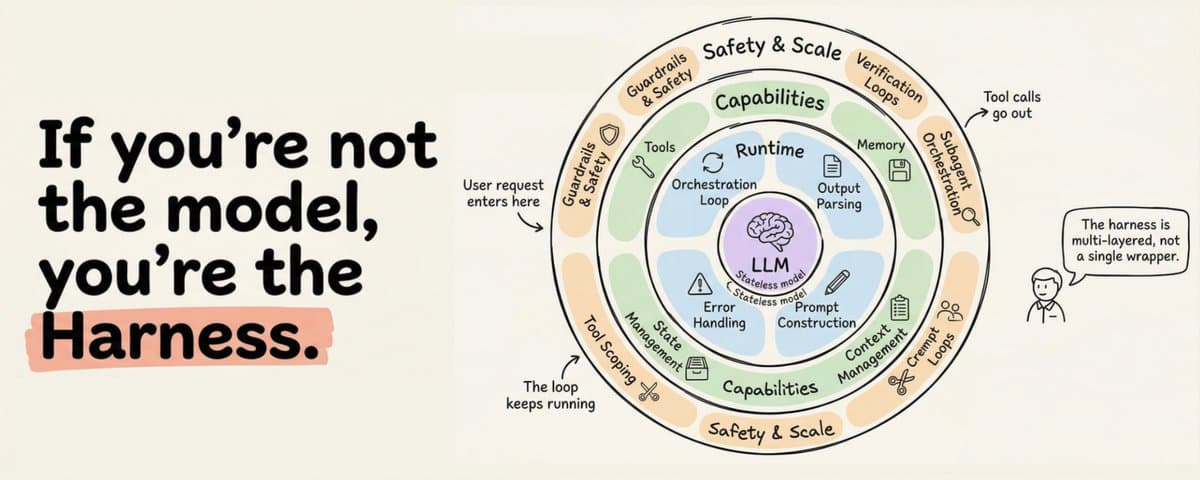

Tool-using means the AI can do things instead of just saying them. MCP integrations. Permission pipelines. API calls. File edits. Database queries. The model is no longer the bottleneck. The harness around it is.

Stack those three together and you stop having chatbots and start having coworkers.

This is why every serious AI team in 2026 is obsessing over the same set of problems. Context management. State persistence. Tool reliability. Error recovery. Permission systems. Observability. None of these sit inside the model. All of them sit in the layer around it.

If you are an engineer, this is the most important thing to internalize. The model is commodity. The harness around it is not. The team that ships in 2026 is the team that figures out how to build that harness before their competitors do.

What this means for you

You have been reading this thinking one of two things.

Either "I need to pick one of these trends and go deep." Or "this feels like too much, I will wait until the dust settles."

Both are wrong.

You do not need to pick one. You need to pick a workflow you actually do every week and wrap every one of these trends around it, one at a time. If you write code, start with agentic coding. Then add a knowledge base for your stack. Then build a retrieval layer for your past PRs. You will find that each trend makes the next one easier, because they are all pieces of the same machine.

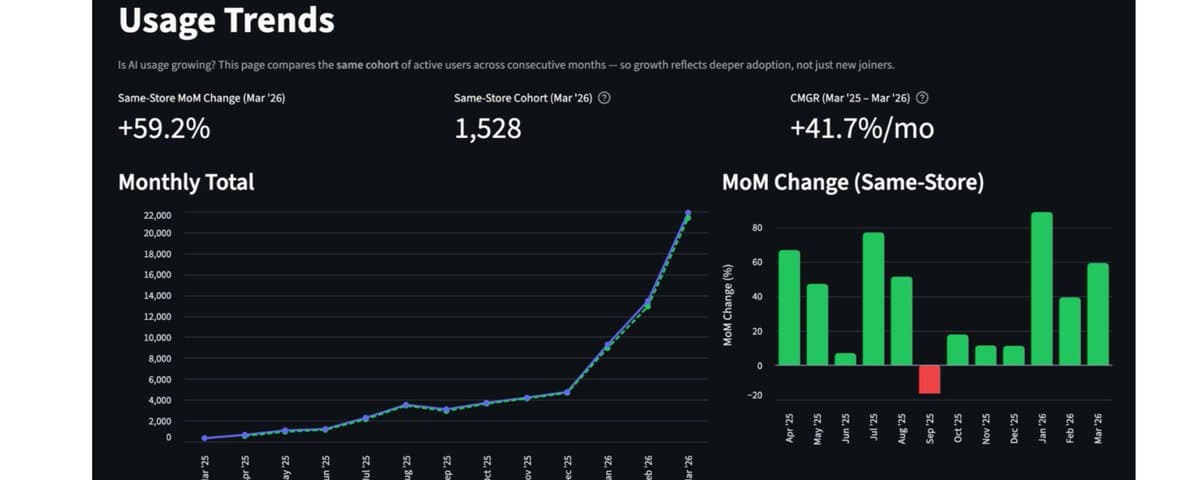

And you cannot wait for the dust to settle. The dust is not going to settle. The teams building now are going to have a year of compounding context advantage by the time anyone feels ready to start. That is not a prediction. That is what compounding does. You already know this. You just have not applied it to AI yet.

Start small. Pick one workflow this weekend. Give the AI a project file and a memory layer. One tool it can touch. Run it for a week and see what happens.

A month from now you will be looking at the person still typing prompts into a chat window and wondering how you ever did it that way.

That is what 2026 is. Not a better model year. Not a benchmark year. A year where the people who stopped talking to AI and started building around it ran away with the next decade of leverage.

The rest of this year is about whether you join them now or catch up later.
