Most companies are still debating their AI strategy. They are overthinking it. Here is the playbook we followed to get everyone at the company building with AI.

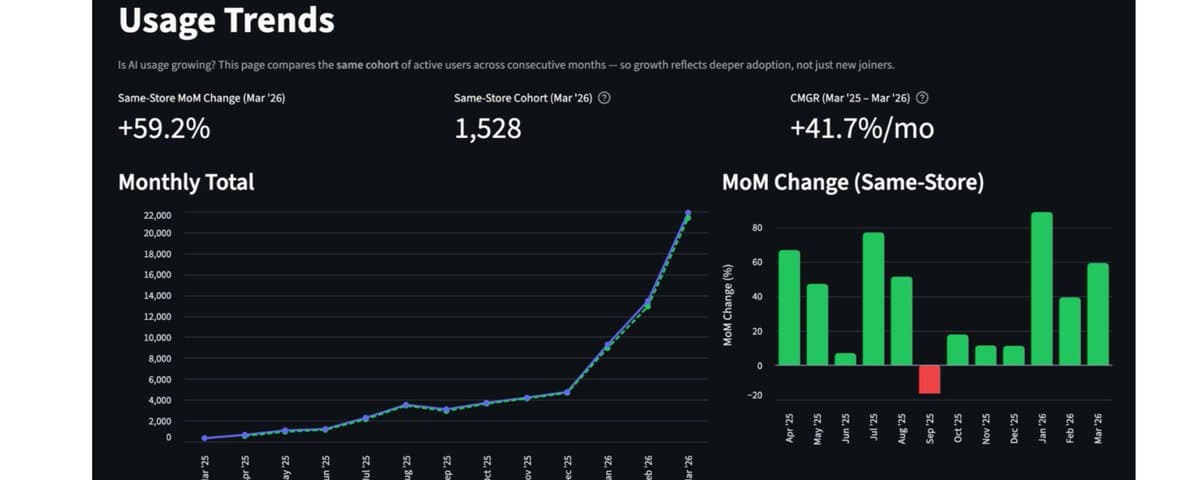

Ramp's AI usage is up 6,300% from last year. 99.5% of the team is active on AI tools. 84% use coding agents weekly. 1,500+ apps shipped on our internal platform in six weeks, from 800+ different builders. Non-engineers now account for 12% of all human-initiated PRs on the production codebase - thousands per month - using Ramp Inspect, our home-built coding agent.

We did this by obsessing over getting every employee to embrace this new technology - a shift akin to computers entering the workforce. We built our own Claude Cowork called Glass that gives anyone at the company a highly configured AI agent that is fully connected with Ramp's systems and aware of how we build. We hosted the largest AI hackathon ever - 700 participants across sales, CX, legal, marketing, and finance, coached by 100 of our most capable engineering and product teammates. They shipped more in a week than we previously could in a year.

We changed our hiring and talent management processes. We gave everyone unlimited budget to build, learn, and explore. We created leaderboards to incentivize usage. We reorganized teams around those who see the future. We celebrated wins at all hands. We relentlessly pushed every single person and leader to build. And the result is far beyond what I could ever have imagined.

Here's how we got here. The interesting part isn't the numbers or the tools. It's that we didn't have a plan. All we had was a culture and talent, and we kept doubling down on the things that were working with the people and technology in front of us. And watched it compound.

1. The second best time to start is today.

At Ramp, our culture is velocity. It shapes every process and team ritual. That culture turned out to be the single biggest accelerant for AI adoption.

At our January 2025 company kickoff, we told the whole company we would become the most productive company in the world. We believed we could do it given Ramp's culture. We had no idea how.

We started with the obvious:

1. Leadership clarity that AI usage is an expectation

1. A dedicated AI "guild" that was responsive to any question

1. Slack channels where teams can share what they built

1. Dedicated all hands time to celebrate builders

1. Mandated AI usage and tracking for everyone

There was no formal change management program. No mandatory training curriculum. Instead, we built the infrastructure for people to teach themselves and each other. The reality is that teams just need to be given a chance. Everyone wants to build. And with AI, anyone can.

2. Treat AI proficiency as a learning curve, not a light switch.

A year ago, most of us used AI the way everyone did. ChatGPT in a tab. AI search in Notion. Fine, but not transformational.

What we observed was that productive output leaps when people clear certain thresholds of comfort. Almost nobody outside of some exceptional engineers was operating at the upper levels before 2025. But in late 2025 and this year, we accelerated massively -- because we spent last year building a strong foundation first.

We think about AI proficiency in four levels:

- L0: Sometimes uses ChatGPT. Has not changed any workflows. If you're still here and not self-starting, you will most likely not be at the company.

- L1: Built custom GPTs, used Notion agents, dabbled in Claude Code. Starting to see what's possible but hasn't compounded it yet.

- L2: Built an app that automates part of their job. Committed code or contributed feedback to others' work. This is where things get real.

- L3: Systems builders. They don't just use AI -- they build the infrastructure that levels up everyone else. These people are force multipliers.

Our job is to get everyone up the ladder. Three things make that possible:

1. Build tools that meet people where they are. We started by shifting the whole company to Claude and Notion AI connected to all of our workplace tools -- a low technical bar where everyone could participate and get meaningful benefit. That got people from L0 to L1.

1. Raise expectations as tools mature. AI proficiency moved into hiring screens, onboarding, and how we talk about performance. Not as an end in itself, but as a stated expectation: getting good at these tools is essential to doing any job at Ramp well. That pushes L1s to L2.

1. Match the mandate to the tooling. If you raise expectations before the tools can deliver, you burn credibility and people stop listening.

3. Embrace creative destruction.

This is the part that makes Ramp exhilarating and uncomfortable in equal measure.

Many of the tools we shipped in January 2026 are already obsolete -- replaced by better versions, often from the same builders. We've gotten comfortable with a shelf life of weeks, not months. Every LLM update, every improvement to Claude Code or Codex harnesses, every new batch of skills we release reshapes what's possible. If your internal tools from three months ago still feel state-of-the-art, you're not moving boldly enough.

Our data democratization journey tells the story well:

- Phase 1: Notion AI was the best option, so we piped important data into Notion databases to run our agent over it.

- Phase 2: We launched Ramp Research, a Slack-based Snowflake research tool.

- Phase 3: As coding agents matured, we encoded Snowflake research into skills those agents could use directly.

- Phase 4: Now we're making data research interactive and self-improving.

Each generation opened doors the previous one couldn't. Each former generation was quietly sunset. The tools we're running right now? We genuinely hope they're obsolete by June.

From the outside, this looks chaotic. From the inside, it's the opposite. People aren't attached to their tools. They're attached to their problems. When a better way to solve the problem shows up, they grab it.

4. Build from the center, drive from the spokes.

We got the org design wrong before we got it right.

The initial instinct was to centralize: one small team builds tools for the whole company. Demand outstripped capacity almost immediately. Then we swung decentralized -- every team builds their own things. Tons of redundant re-learning.

The answer was to do both:

1. A small central team builds the platforms, connectors, and plumbing across LLMs, data, knowledge, and workflows. They also manage training, enablement, and change management.

1. Functional teams build on top of those platforms and give feedback that drives the central team's roadmap.

The results speak for themselves:

- A risk analyst automated 16 hours per month of manual financial modeling.

- A sales ops lead replaced a spreadsheet-based comp model across three orgs in 48 hours.

- An L&D lead built a training simulator in 15 minutes.

- Someone in finance built a contract reviewer that saves 45 minutes per contract -- and Ramp has a lot of contracts.

None of them are engineers.

These people didn't file a ticket. They found their own pain, prototyped a fix, and pulled engineering in when it was time to go to production -- when that was even necessary. The spokes drove the center as much as the center drove the spokes.

5. Give people a stage, not just a mandate.

Mandates decay. Culture is what remains.

The strategy, to the extent there was one, was to light as many small fires as possible and see which ones grew:

1. A Slack channel (#ramp-uses-ai) - just to see what happened. Now over 1,000 members. Spun off 40+ team-specific channels that collectively generate 20,000 messages per month.

1. AI office hours every Friday - 40-50 or more people regularly show up with questions.

1. AI onboarding for new hires - rebuilt four times in the last year as ambition grew.

1. Dedicated all hands - we have everyone from our CEO to a first-line operator demo what they have built with AI.

The early converts mattered more than anything. On every team, there was one person - the ambitious sales ops lead, the frustrated product operator, the eager data scientist. They got curious, got sucked in, and became contagious within their teams. We made them visible: company all hands spotlights, resources to build team-level tools, pairing them up when collaboration was warranted.

All this public building creates a competitive dynamic that everyone feels. Nobody wants to be the team that isn't building anything. When a CSM sees a risk analyst ship something that saves 16 hours a month, they don't think "good for Risk." They think "what can I build?"

That loop - build, share, inspire, build more - does more than any mandate or memo. The biggest surprise wasn't who built the most. It was how many people had been waiting for permission to build at all.

6. Get people to the "Aha" moment as fast as possible.

Training on its own doesn't work. Office hours and workshops help. But the world's best teacher is staring you in the face: it's AI. You can only lead a horse to water. The single biggest unlock is getting someone to experience a real result on day one.

We learned this the hard way. Despite hitting 90%+ adoption of AI tools across the company, most people were stuck on a basic chat interface. The models were good enough. The harness wasn't. Terminal windows, npm installs, MCP configurations - these were simply too hard for the majority of people to grok. And the people who *did* push through had wildly different setups with siloed learnings that weren't compounding.

So we built Glass - our own version of Claude Cowork, built on Anthropic's Claude Agent SDK.

Glass auto-configures on install. You authenticate once via Okta SSO and 30+ tools light up - Salesforce, Snowflake, Gong, Slack, Notion, Google Workspace, Figma. No setup guide. No ticket to IT. If the user has to debug, we've already lost.

A team of four built it in under three months. 700 daily active users within a month of launch. The people who got the most value weren't the ones who attended training sessions. They were the ones who installed a skill on day one and immediately got a result. The product taught them faster than we ever could.

When you own the tool, you see exactly where people get stuck and ship a fix the same day. Every session generates signal about how non-engineers actually learn to use AI - which skills get adopted, where people break through, what separates someone who uses it once a week from someone who uses it every day.

We also built a skills marketplace called Dojo where anyone can package a workflow and share it. Over 350 skills shared company-wide. A sales rep figures out the best way to analyze Gong calls and draft battlecards - packages it as a skill, and now every rep has that superpower. Every skill shared raises the floor for everyone.

The result is that anyone can create almost anything in 5 minutes.

7. Make it a competition.

People are competitive. At least this is true at Ramp.

We built an internal leaderboard that tracks AI usage across every team and individual at Ramp. Sessions run, skills used, apps shipped, tools connected. It's visible to everyone. The skeptics will tell you this is a vanity metric - that tracking usage incentivizes busywork, not productivity. We found the opposite.

The top AI users at Ramp are often the highest performers. AI proficiency is a skill like any other - the more reps you get, the better you become. The power users are developing muscle memory for when to reach for AI, how to prompt effectively, which skills to combine, and when to override. They're compounding their own leverage.

The leaderboard created three dynamics we didn't fully anticipate:

1. Healthy peer pressure. Nobody wants to be at the bottom. When you can see that your peer on another team is running 3x more sessions and shipping tools that save their team hours, you don't need a mandate to start building. You need your competitive instincts.

1. Manager accountability. Team-level rankings made it impossible for managers to ignore AI adoption. If your team is in the bottom quartile, that's a conversation you're going to have. It shifted AI from "nice to have" to "part of how we evaluate whether teams are operating at their potential."

1. Discovery through emulation. The leaderboard isn't just a scoreboard - it's a map. When you see someone at the top, you want to know what they're doing. You look at their skills, their workflows, their apps.
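To make the mechanics concrete, here is a minimal sketch of how a leaderboard over the four signals named above (sessions run, skills used, apps shipped, tools connected) could rank individuals and teams and flag the bottom quartile. It is not Ramp's implementation: the `Usage` record, the `WEIGHTS` values, the sample rows, and the choice to rank teams by mean score per person are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Usage:
    """One person's activity for a period (hypothetical schema)."""
    person: str
    team: str
    sessions: int
    skills_used: int
    apps_shipped: int
    tools_connected: int


# Hypothetical weights: shipping an app counts for more than running a session.
WEIGHTS = {"sessions": 1, "skills_used": 2, "apps_shipped": 10, "tools_connected": 1}


def score(u: Usage) -> int:
    """Weighted sum of the four usage signals."""
    return sum(getattr(u, field) * weight for field, weight in WEIGHTS.items())


def rank_individuals(rows: list[Usage]) -> list[Usage]:
    """Highest score first."""
    return sorted(rows, key=score, reverse=True)


def rank_teams(rows: list[Usage]) -> list[tuple[str, float]]:
    """Rank teams by mean score per person so large teams do not win on headcount."""
    by_team: dict[str, list[int]] = defaultdict(list)
    for u in rows:
        by_team[u.team].append(score(u))
    averages = [(team, mean(scores)) for team, scores in by_team.items()]
    return sorted(averages, key=lambda pair: pair[1], reverse=True)


def bottom_quartile(team_ranking: list[tuple[str, float]]) -> list[str]:
    """Teams in the lowest quarter of the ranking (at least one team)."""
    n = max(1, len(team_ranking) // 4)
    return [team for team, _ in team_ranking[-n:]]


# Made-up sample data, purely for illustration.
rows = [
    Usage("ana", "Risk", 40, 12, 3, 8),
    Usage("ben", "Risk", 20, 5, 1, 6),
    Usage("cy", "Sales", 30, 10, 2, 7),
    Usage("di", "Finance", 10, 2, 0, 4),
    Usage("ed", "CX", 25, 6, 1, 5),
]

if __name__ == "__main__":
    for team, avg in rank_teams(rows):
        print(f"{team}: {avg:.1f}")
    print("Bottom quartile:", bottom_quartile(rank_teams(rows)))
```

The weights are the design decision that matters most. Weighting shipped apps and shared skills above raw session counts is one way to answer the busywork objection, because it rewards output rather than activity.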

If you're not measuring it, you're not managing it. And if you're not making it visible, you're leaving the most powerful adoption lever on the table.

This extends to hiring and performance management. We now have an absolute requirement for anyone joining Ramp to be proficient with AI tools. No exceptions. For PM candidates, there's a dedicated interview session: build me a product, show me how you built it, walk me through how it works. It's a full-blown prototype, not a slide deck. If you can't demonstrate that you've internalized these tools, you don't clear the bar.

8. Remove every constraint between your people and AI.

The number one way companies kill AI adoption is by treating it like a procurement decision. Budget approvals. IT reviews. Token limits. Connector requests that sit in a queue for weeks. Every one of these is a wall between your people and their "aha" moment.

We took the opposite approach. Three things we did early that mattered more than almost anything else:

1. Treat AI usage as an infinite learning budget. If you demand ROI on every token before people have even learned to use the tools, you'll never get adoption. We gave people room to explore with the explicit expectation that the payoff comes from the compounding, not from day one.

1. Kill token limits and access restrictions. No caps on usage. No tiered access based on role. No "you're not an engineer, you don't need this." Everyone gets the same tools, the same models, the same access. The people who surprised us most were the ones we would have never given access to under a traditional approval process.

1. Remove every IT bottleneck on connectors. An AI agent is only as useful as what it can access. If your people have to file a ticket and wait two weeks for IT to approve a Salesforce connection or a Snowflake integration, they'll lose momentum and never come back. We pre-connected 30+ tools - Salesforce, Snowflake, Gong, Slack, Notion, Google Workspace, Figma - so that when someone opens Glass, everything is already live. One SSO authentication and they're working.

Here's the cost math that should reframe the conversation for any CFO: we pay our employees a lot of money. Token consumption per employee today isn't even close to double-digit percentages of their salary. But if someone is 2x more productive with AI, you should be willing to spend their entire salary again in tokens. If you have agents that can do 10x more work than a person, why would you not pay them twice as much as that person?
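The arithmetic behind that argument can be sketched in a few lines. This is a back-of-the-envelope model, not Ramp's finance model. It assumes an employee's output is worth at least their salary and that extra output scales linearly with the productivity multiplier. The salary and token-spend figures are hypothetical placeholders.

```python
def break_even_token_spend(salary: float, productivity_multiplier: float) -> float:
    """Token spend at which the extra output exactly pays for itself.

    Assumes baseline output is valued at `salary`, so a multiplier of 2.0
    yields one additional salary's worth of output.
    """
    if productivity_multiplier < 1.0:
        raise ValueError("multiplier below 1.0 means AI made the person slower")
    return salary * (productivity_multiplier - 1.0)


def token_share_of_salary(token_spend: float, salary: float) -> float:
    """Token spend as a fraction of salary."""
    return token_spend / salary


if __name__ == "__main__":
    salary = 200_000.0       # hypothetical annual salary
    token_spend = 10_000.0   # hypothetical annual token spend

    print(f"Current share of salary: {token_share_of_salary(token_spend, salary):.0%}")
    print(f"Break-even at 2x:  {break_even_token_spend(salary, 2.0):,.0f}")
    print(f"Break-even at 10x: {break_even_token_spend(salary, 10.0):,.0f}")
```

Under these assumptions, a 2x multiplier breaks even at exactly one additional salary in tokens, which is the figure quoted above. A 10x agent breaks even at nine salaries, so paying twice a salary for it leaves a wide margin.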

Watch it compound.

We didn't start with a better strategy than most companies. We might have had better starting conditions: a culture that rewards speed and initiative, people who try things without waiting for permission, a leadership team that backs bold bets because we know it's good for our customers.

In lieu of a master plan, we just started. We kept building tools, kept raising the bar, kept investing in data and AI infrastructure, kept creating venues for people to show off. Each track compounded separately. As they reinforced each other, the curve went vertical.

We are in the very early innings of AI. Your job as a leader is to give your teams superpowers and make them believe in themselves. Everything else follows.

The most important lesson is the simplest one: just get started.

*We're hiring builders across all functions. If you want to work somewhere where non-engineers ship production code, internal tools have a shelf life of weeks, and nobody thinks that's weird -- come build with us at Ramp.*

*Shoutout to @bleviathan for the collaboration on this piece.*